Rawstudio 2.0 Technical Improvements pt 1

In this post we look at the major technical changes that have been made during the development of Rawstudio 2.0.

Structural changes

The first thing we started on for Rawstudio 2.0 was a complete restructuring of all internal image processing. Even though Rawstudio 1.x worked OK, its image processing was becoming increasingly difficult to extend and work with.

So a major step was the creation of image processing and loading plug-ins. All internal functions were torn completely apart and split into separate filters that work relatively independently of each other.

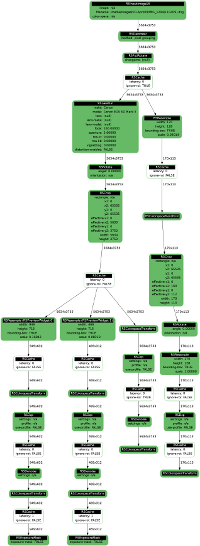

This work, done almost two years ago, laid the foundation for a complete rewrite of most of the internal functions, and has made development much more transparent overall. Below you can see a visual representation of the current filter graph in Rawstudio, which is responsible for all image rendition.

I will not go into a detailed explanation of the graph and the plugin structure, but the different outputs are attached to the lower ends of the graph and request images upward through their chain, where the topmost filter is the raw file loader.

I will now give a little technical detail on each of the parts involved, from loading a raw file until it is displayed on the screen, and on what has changed for Rawstudio 2.0.
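
To make the "request images upward" idea concrete, here is a minimal sketch of a pull-based filter chain. The `Filter` class, its `get_image` method and the filter names are hypothetical and only illustrate the pattern; they are not Rawstudio's actual plugin API.

```python
class Filter:
    """One node in a pull-based chain (hypothetical, not Rawstudio's API)."""

    def __init__(self, name, previous=None):
        self.name = name
        self.previous = previous  # the filter one step up the chain

    def process(self, image):
        # Stand-in for real work: record that this filter touched the image.
        return image + [self.name]

    def get_image(self):
        # Ask the filter above for its result first. The topmost filter
        # (the loader) has nothing above it and starts from an empty image.
        upstream = self.previous.get_image() if self.previous else []
        return self.process(upstream)


loader = Filter("load")
demosaic = Filter("demosaic", previous=loader)
# Two outputs attached to the lower end of the same chain.
display = Filter("display", previous=demosaic)
export = Filter("export", previous=demosaic)

display_steps = display.get_image()
export_steps = export.get_image()
```

Each output only knows the filter directly above it, so a new output can be attached without touching the rest of the chain.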

Loader

We have been meaning to re-write the basic raw loader for quite some time. Until now we have relied on dcraw to do all of the basic raw decoding, but we wanted to see if it was possible to squeeze more speed out of the decoding process, so RawSpeed was born. It is a decoder that is independent of Rawstudio, but it is included by default.

Here are some timings for a full decode of a RAW file in each format. They measure the time from when the loaded data is handed to RawSpeed until the decompressed data is returned to Rawstudio, which includes scaling the image values from x bits to 16 bits per component.

The timings were measured on a quad-core Intel Core 2 Q6600.
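
As an illustration of the scaling step, the sketch below widens x-bit sample values to the 16-bit range with a plain left shift. This is an assumption made for the example: the function name is hypothetical, and the actual decoder may scale differently, for instance by taking the black and white levels into account.

```python
def scale_to_16bit(values, source_bits):
    """Scale raw samples of `source_bits` bits up to the 16-bit range.

    Assumption: a plain left shift, which ignores black/white levels.
    """
    if not 1 <= source_bits <= 16:
        raise ValueError("source_bits must be between 1 and 16")
    shift = 16 - source_bits
    return [v << shift for v in values]


# A 12-bit sensor value occupies the top 12 bits of the 16-bit result.
scaled = scale_to_16bit([0, 1, 4095], 12)
```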

- DNG from Adobe DNG Converter (compressed): 90 MP/sec

- DNG from Adobe DNG Converter (uncompressed): >300 MP/sec

- Canon CR2, ordinary RAW: 45 MP/sec

- Canon CR2, sRAW/mRAW: 60 MP/sec

- Nikon NEF (compressed): 45 MP/sec

- Nikon NEF (uncompressed): >300 MP/sec

- Sony ARW (compressed): 30 MP/sec

- Sony ARW2 (compressed/uncompressed): >200 MP/sec

- Olympus ORF (compressed): 30 MP/sec

- Panasonic RW2 (compressed): >150 MP/sec

- Pentax PEF (compressed): 45 MP/sec

- Pentax DNG (compressed): 40 MP/sec

So if you have a 15 megapixel Panasonic camera, Rawstudio should be capable of decoding at least 10 images per second, if your hard disk can keep up :)
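
The estimate is simply the decode throughput divided by the sensor size. A small sketch of that arithmetic, with a hypothetical helper name:

```python
def images_per_second(decode_mp_per_sec, sensor_megapixels):
    """Images decoded per second at a given throughput (disk I/O ignored)."""
    return decode_mp_per_sec / sensor_megapixels


# Panasonic RW2 decodes at more than 150 MP/sec, so this is a lower bound.
panasonic_rate = images_per_second(150, 15)
```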

For cameras not on this list, Rawstudio will still fall back to dcraw for decoding.

In the real world, that gives usage scenarios that look like this:

“Proofing” export.

Setup: Export 100 images in max 1600 x 1600 pixels in JPG. All Rawstudio functions used: DCP profile, Lens Distortion correction enabled, sharpen & denoise enabled. The images are from a Canon EOS 20D (8 Megapixel camera).

Total time for 100 images: 140 seconds – that is 1.4 seconds for each image.

Full export.

Setup: Export 100 images in full size JPG – same settings as above

Total time for 100 images: 300 seconds, that is 3 seconds per image or about 2.7 megapixels per second.

More “exotic” cameras are supported through fallback to dcraw. A list of cameras supported by the RawSpeed loader can be found here:
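
For reference, the per-image time and throughput follow directly from the totals. The sketch below redoes the arithmetic, assuming a round 8 megapixels per image for the EOS 20D; the helper name is hypothetical.

```python
def export_stats(total_seconds, image_count, megapixels_per_image):
    """Return (seconds per image, megapixels processed per second)."""
    seconds_per_image = total_seconds / image_count
    mp_per_second = megapixels_per_image / seconds_per_image
    return seconds_per_image, mp_per_second


proof_seconds, _ = export_stats(140, 100, 8)   # "proofing" export
full_seconds, full_mp = export_stats(300, 100, 8)  # full-size export
```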

http://rawspeed.klauspost.com/supported/cameras.xml

Demosaic & Hotpixel removal

The basic demosaic routine from Rawstudio 1 has been maintained, since it was fairly fast and provides excellent overall quality. It has however been optimized and multithread-enabled, so it will now utilize all available cores for demosaic, resulting in an almost linear speedup based on how many cores your CPU has.
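
One common way to spread this kind of work over all cores is to cut the image into horizontal bands and hand each band to a worker. The sketch below shows only that partitioning; it is not Rawstudio's code, the function names are hypothetical, and the per-band work is a stand-in. A real demosaic also needs to read a few neighboring rows across band borders, and Python threads will not give a real speedup for pure-Python work.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_rows(height, workers):
    """Divide rows 0..height into nearly equal contiguous (start, end) bands."""
    base, extra = divmod(height, workers)
    bands, start = [], 0
    for i in range(workers):
        end = start + base + (1 if i < extra else 0)
        if end > start:  # skip empty bands when workers > height
            bands.append((start, end))
        start = end
    return bands


def process_band(image, band):
    # Stand-in for the per-row demosaic work: each value is just doubled.
    start, end = band
    return [[v * 2 for v in row] for row in image[start:end]]


def process_parallel(image, workers=None):
    workers = workers or os.cpu_count() or 1
    bands = split_rows(len(image), workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() keeps the band order, so rows come back in image order.
        parts = list(pool.map(lambda band: process_band(image, band), bands))
    return [row for part in parts for row in part]


result = process_parallel([[1, 2], [3, 4], [5, 6]], workers=2)
```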

A very simple and fast “preview” demosaic has also been implemented, which is used the first time a picture is shown.

An automatic hot-pixel removal has been added to this stage of the process, and it will detect and remove stuck or dead pixels automatically. Since this is an automatic process it is set very conservatively to avoid removing actual image details – this means it will not remove pixels that are only slightly hotter than the surrounding pixels for instance. It should however still be able to detect sudden single pixel errors, and take out ugly single pixels in skies, black areas and similar.

Here is a part of an image, taken with a very bad sensor, that has a lot of hot pixels:

And here is the same part of the same image after the automatic hot-pixel removal:

This is a pretty optimal situation for the algorithm: not much detail and fully saturated hot pixels. It cannot be used as a general solution if your sensor is defective or simply generates a lot of hot pixels on long exposures. But it should help you out if you have a few stuck pixels that are clearly visible in a blue sky, for example.

Technically, the hot-pixel removal works like this:

- Calculate the minimum absolute difference between this pixel and the pixels placed two pixels (in CFA terms) to the left, right, up and down. (Due to the current CFA layout of sensors, these will always be the same color as the current pixel.)

- Calculate the maximum absolute difference between the pixels directly to the left and directly to the right, and do the same for the pixels above and below.

- If the results of the two tests above differ by more than a factor of 8, and the difference is larger than an absolute number, do an extended test; otherwise leave the pixel alone.

- In the extended test, do the same as above, but move the test pixels two pixels further away.

- If the difference is still larger than the threshold, we have a single pixel that is very different from the surrounding pixels, in an area with very little detail, so we assume it is a hot/dead pixel and interpolate it away.
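
The steps above can be sketched roughly as follows. This is one reading of the description, not the actual Rawstudio code: the absolute threshold value, the border handling and the interpolation (a plain average of the four same-color neighbors) are assumptions, and all names are hypothetical.

```python
FACTOR = 8            # ratio named in the text
ABS_THRESHOLD = 2000  # hypothetical value; the real threshold is not given


def _passes(cfa, y, x, same_dist, adj_dist):
    """One round of the test at the given neighbor distances."""
    p = cfa[y][x]
    # Same-color neighbors to the left, right, up and down.
    same = [cfa[y][x - same_dist], cfa[y][x + same_dist],
            cfa[y - same_dist][x], cfa[y + same_dist][x]]
    min_same = min(abs(p - n) for n in same)
    # Local detail: left vs. right and up vs. down around the pixel.
    max_adj = max(abs(cfa[y][x - adj_dist] - cfa[y][x + adj_dist]),
                  abs(cfa[y - adj_dist][x] - cfa[y + adj_dist][x]))
    return min_same > ABS_THRESHOLD and min_same > FACTOR * max_adj


def is_hot_pixel(cfa, y, x):
    # First test at distances 2 and 1, extended test two pixels further out.
    return _passes(cfa, y, x, 2, 1) and _passes(cfa, y, x, 4, 3)


def remove_hot_pixels(cfa):
    height, width = len(cfa), len(cfa[0])
    out = [row[:] for row in cfa]
    # Assumption: pixels closer than 4 to the border are left untouched.
    for y in range(4, height - 4):
        for x in range(4, width - 4):
            if is_hot_pixel(cfa, y, x):
                # Assumed interpolation: average of same-color neighbors.
                out[y][x] = (cfa[y][x - 2] + cfa[y][x + 2] +
                             cfa[y - 2][x] + cfa[y + 2][x]) // 4
    return out


# A flat 11x11 area with one fully saturated pixel in the middle.
flat = [[1000] * 11 for _ in range(11)]
flat[5][5] = 65535
cleaned = remove_hot_pixels(flat)
```

In this sketch a pixel next to a real edge is left alone, because at least one of its same-color neighbors has a similar value and the minimum difference stays small.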

We have done extensive testing to adjust the threshold so that we don’t get any false hits, and we are fairly confident that it will not introduce image degradation in ordinary circumstances.

Next up

In the next part we will have a look at the image processing that has a more direct influence on how your image will look.

Nice overview, and thanks for the update. I’ll briefly pretend to speak for everyone and say that this is exactly the kind of insight into the development process that we want: it’s a good combination of broad overview and interesting details, and it’s all stuff that’s relevant to a large number of users (even if there’s a fair amount of geekery that’s not essential). Keep it up!
